Our lives increasingly depend on technology, and every day more industries move online or scale up their existing platforms. As demand grows, it is important to give users continuous deployment so that they stay current with software updates.

There are many techniques for shipping updates while giving users the same seamless experience, and picking an effective deployment strategy is an important decision for every DevOps team.

Which of the available deployment strategies to choose depends on various factors.

One of these strategies is ‘Canary Deployment’, which can help put the best code into production as efficiently as possible. In this article, we’ll go over what canary deployments are and what they aren’t. We’ll go over the pros and cons of canary deployments, and show you how you can easily begin performing canary deployments with your team.

What is Canary Deployment?

The term “canary deployment” has an interesting history. It comes from the phrase “canary in a coal mine,” which refers to the use of small birds (canaries) by miners to detect dangerous levels of carbon monoxide underground. If the birds fell ill or died, it was a warning that odourless toxic gases, like carbon monoxide, were present in the air. From there, the name “canary” was borrowed for an early warning mechanism in the deployment pipeline.

In a similar way, even when the pipeline is fully automated, bugs, broken features, and unintuitive behaviour are difficult to detect until real traffic hits the real production servers. The testing environment and test cases probably don’t cover 100% of possible scenarios, so some defects will reach production. Canary deployment is a good way to limit the impact of those defects.

Canary Deployment in Software Deployments

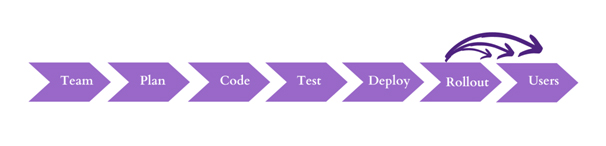

In software engineering, Canary Deployment is the practice of making staged releases. Canary deployments are best suited for teams who have adopted a continuous delivery process.

Here we roll out a software update to a small number of users first, so they can test it and provide feedback. Once the change is accepted, the update is rolled out to the rest of the users.

One of the biggest advantages of canary deployment is that we can push new features more frequently without having to worry that a new feature will harm the experience of the entire user base.

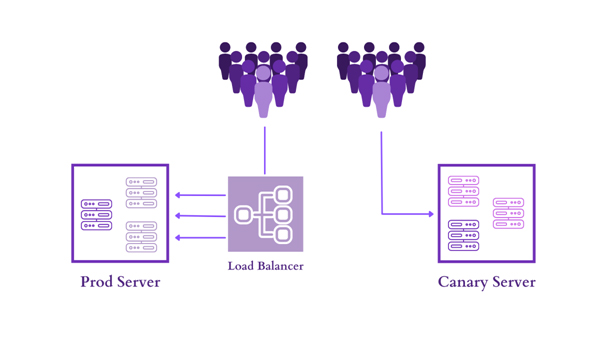

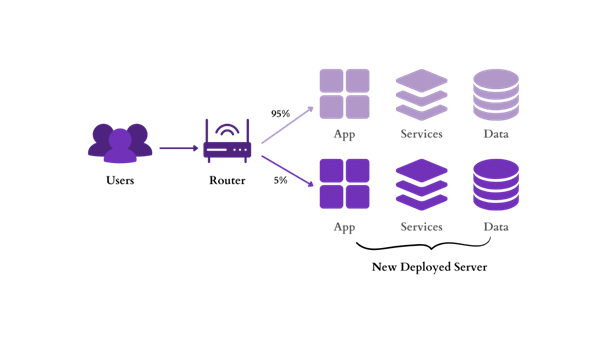

With canaries, the new version of the application is gradually deployed to the servers while receiving only a very small amount of live traffic (i.e. a subset of live users connects to the new version while the rest still use the previous version).

As we gain confidence in the security and reliability of the new code, more canaries are created and more users connect to the updated version. Once the change is accepted, the update is rolled out to the rest of the users.
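One common way to send a stable subset of users to the canary is to hash each user ID into a bucket and compare the bucket with the current canary percentage. The sketch below is illustrative only: the function names `bucket_for` and `route`, the use of SHA-256, and the 100-bucket split are assumptions made for this example, not part of any particular load balancer or platform.

```python
import hashlib


def bucket_for(user_id: str, buckets: int = 100) -> int:
    """Map a user ID to a stable bucket in the range [0, buckets)."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    # Use the first 8 bytes of the digest as an unsigned integer.
    return int.from_bytes(digest[:8], "big") % buckets


def route(user_id: str, canary_percent: int) -> str:
    """Return "canary" for roughly canary_percent% of users, else "stable".

    The same user always lands in the same bucket, so a user who is on the
    canary at 10% is still on the canary when the share is raised to 50%.
    """
    return "canary" if bucket_for(user_id) < canary_percent else "stable"
```

Because the bucket depends only on the user ID, raising `canary_percent` adds new users to the canary without moving existing canary users back to the previous version.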

Steps for Canary Deployment

In its simplest form, a canary deployment has three stages.

Planning: The first step involves creating a new canary infrastructure where the latest change is deployed. A small portion of traffic is sent to the canary server, while the rest of the traffic continues to go to the prod server.

Testing: Once some traffic is diverted to the canary server, data is collected in the form of metrics, logs, and information from all network sources. This data is then analysed and compared with the production server to determine whether the performance and functionality of the new canary feature are as expected.

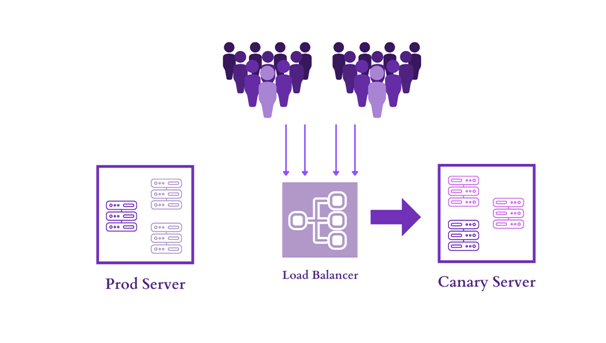

Rollout: After the canary analysis is completed, the team uses the results of the Testing phase to decide whether to move ahead with the release and roll it out to the rest of the users, or to roll back to the previous baseline state.
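The Testing and Rollout stages can be sketched as a simple comparison between canary and baseline metrics. This is a minimal illustration: the `Metrics` fields, the `decide` function, and every threshold value below are assumptions chosen for the example, and a real team would tune them to its own service.

```python
from dataclasses import dataclass


@dataclass
class Metrics:
    requests: int
    errors: int
    p95_latency_ms: float

    @property
    def error_rate(self) -> float:
        # Avoid division by zero when no traffic has arrived yet.
        return self.errors / self.requests if self.requests else 0.0


def decide(
    baseline: Metrics,
    canary: Metrics,
    min_requests: int = 500,                 # assumed minimum sample size
    max_error_rate_increase: float = 0.01,   # assumed tolerance (absolute)
    max_latency_ratio: float = 1.2,          # assumed tolerance (relative)
) -> str:
    """Return "wait", "rollback", or "promote" for the canary."""
    if canary.requests < min_requests:
        return "wait"  # not enough canary traffic to judge
    if canary.error_rate > baseline.error_rate + max_error_rate_increase:
        return "rollback"
    if canary.p95_latency_ms > baseline.p95_latency_ms * max_latency_ratio:
        return "rollback"
    return "promote"
```

For example, with a baseline of 10,000 requests and 50 errors, a canary with 1,000 requests and 40 errors exceeds the assumed error tolerance, so `decide` returns `"rollback"`.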

Why Canary Deployment?

Canary deployment offers many benefits, from less downtime and real-world testing to quick rollback. It is one of the most widely used deployment strategies because it allows quick rollback and also tests the application under real-world conditions. It is most useful when the development team isn’t sure about the new version and doesn’t mind a slower rollout if it means catching the bugs.

Let’s take a look at how it works.

We can implement Canary deployment in two different ways:

- Phased deployment: In a phased canary deployment, the changes are installed on the servers in stages, a few machines at a time. During each phase, we monitor performance, errors, and user feedback for the upgraded version on those machines. As we gain confidence in the upgraded version from both a performance and a user perspective, we continue installing it on the rest of the machines until they are all running the latest release.

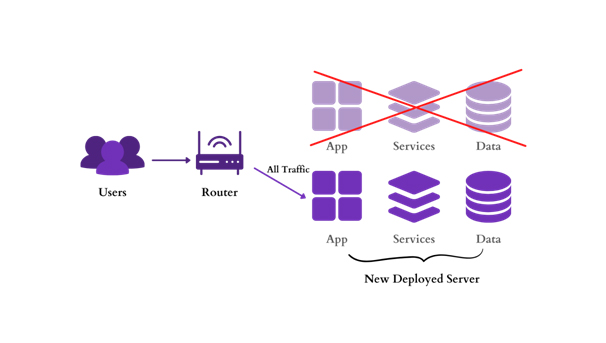

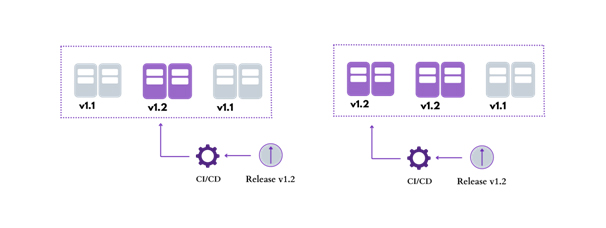

- Alongside deployment: Instead of upgrading the existing machines in phases, we clone the environment and install the canary version there. Once the canary is running in the new environment, we move a portion of the user base to it. This can be done with a router, a load balancer, a reverse proxy, or some other business logic in the application.

We keep monitoring the canary version while we gradually migrate more and more users away from the previous version. The process continues until all users have been migrated, or until we detect a problem or users report one on the canary. Once the deployment is complete, we remove the old environment to free up resources. The canary version is now the new live prod environment.
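The phased variant described above can be sketched as a loop that upgrades a few machines at a time and stops as soon as a batch looks unhealthy. The function `phased_rollout` and its `upgrade`, `is_healthy`, and `rollback` callbacks are hypothetical placeholders for whatever tooling a team actually uses.

```python
from typing import Callable, List


def phased_rollout(
    servers: List[str],
    batch_size: int,
    upgrade: Callable[[str], None],
    is_healthy: Callable[[str], bool],
    rollback: Callable[[str], None],
) -> bool:
    """Upgrade servers in batches; undo everything if a batch is unhealthy.

    Returns True when every server runs the new release, False after a
    rollback.
    """
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")

    upgraded: List[str] = []
    for start in range(0, len(servers), batch_size):
        batch = servers[start:start + batch_size]
        for server in batch:
            upgrade(server)
            upgraded.append(server)
        # In practice this check would follow an observation window.
        if not all(is_healthy(server) for server in batch):
            for server in reversed(upgraded):
                rollback(server)
            return False
    return True
```

Servers in later batches are never touched if an earlier batch fails, which is what keeps the impact of a bad release small.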

Canary Deployment Pros and Cons

Canary deployment is an effective strategy, but it is not the best strategy in every scenario. Let’s go through the pros and cons to better understand which scenarios and teams it suits.

Pros:

- Real World Testing: The canary server is tested with real traffic while the impact on user experience and on the organization's overall infrastructure stays minimal, which gives developers the confidence to innovate.

- Better Risk Management: Canary deployment makes rollback easy. If the new version has an error, traffic can simply be routed back to the prod server. Meanwhile, the DevOps team can determine the root cause and correct it before re-introducing the update.

- Independent Platform: When the application consists of separate microservices, each one can be updated independently, and consistent performance can be maintained on the production server.

- Progressive Start: Canary deployments slowly build up momentum to prevent cold-start slowness. We bring the new application version into service by gradually increasing its share of the load (5%, 10%, 40%, 70%, 100%).
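The progressive ramp and the easy rollback mentioned in this list can be combined in a few lines. This is a sketch under stated assumptions: `ramp`, `set_canary_percent`, and `is_healthy` are hypothetical names standing in for a team's own traffic-shifting and monitoring hooks, and only the step values come from the list above.

```python
from typing import Callable, List, Sequence

# Step values taken from the percentages listed above.
DEFAULT_STEPS = (5, 10, 40, 70, 100)


def ramp(
    set_canary_percent: Callable[[int], None],
    is_healthy: Callable[[int], bool],
    steps: Sequence[int] = DEFAULT_STEPS,
) -> List[int]:
    """Raise the canary's traffic share step by step.

    Returns the list of percentages that were applied. If a step is
    unhealthy, all traffic is routed back (0%) and the ramp stops.
    """
    applied: List[int] = []
    for percent in steps:
        set_canary_percent(percent)
        applied.append(percent)
        # A real pipeline would wait for an observation window here.
        if not is_healthy(percent):
            set_canary_percent(0)
            applied.append(0)
            break
    return applied
```

If the health check fails at the 40% step, the function returns `[5, 10, 40, 0]`, which shows that traffic was sent back to the previous version before the canary ever reached the full user base.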

Cons:

Like any complex process, canary deployment also comes with some downsides.

- Increase in Operational Overhead: A canary deployment needs additional infrastructure that runs alongside the primary environment. We also take on additional administration, because two versions of the codebase run side by side.

- Dashboard Required: Since two servers run different versions of the code at the same time, we need high visibility into user behaviour, system health, and application insights at any point in time so that we can react quickly.

- Challenging to manage database schema changes and API versions: Managing the application version is easy with canary deployment, but when database schema changes and API versions are involved, it turns into a very complex deployment process.

- Cost and complexity: The duplicate environment and side-by-side deployments are more expensive and more complex because of the extra infrastructure and its subsequent operating cost.

Conclusion

Canary deployments are an essential part of every DevOps toolkit: they are an effective strategy, are fast to provision, provide meaningful data, and can be used for almost any app or feature we want to test.

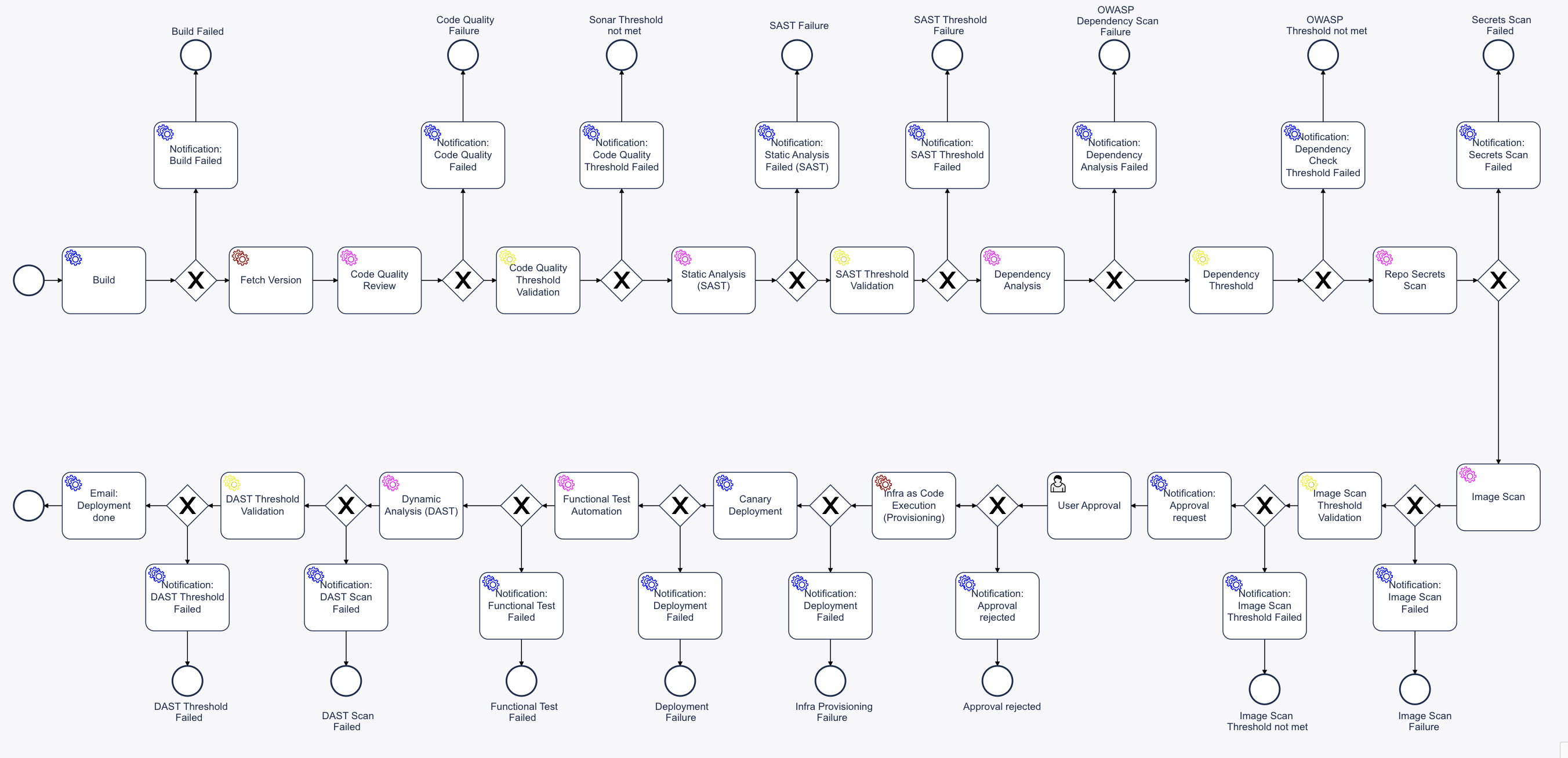

Kaiburr simplifies and accelerates advanced deployment models like canary, blue-green, and rolling deployments using its Low Code DevSecOps platform. With Kaiburr, advanced CI-CD automation like the sample below can be configured in minutes using its drag and drop interface.

A sample CI-CD workflow for a React JS application component is shown below:

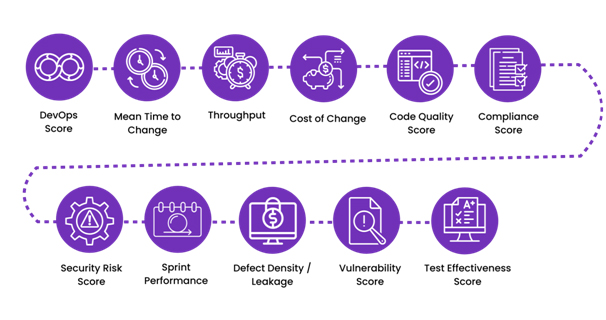

Some significant DevOps Metrics/KPIs covered in Kaiburr at Organization and portfolio levels are:

- DevOps Score

- Mean Time to Change

- Throughput

- Cost of Change

- Code Quality Score

- Compliance Score

- Security Risk Score

- Sprint Performance

- Defect Density / Leakage

- Vulnerability Score

- Test Effectiveness Score

For more information on how you can practically enable Engineering Excellence at your organization, visit us at Kaiburr or sign up for a demo by contacting us at contact@kaiburr.com.